The contemporary digital landscape has fundamentally transformed the discipline of website development. Historically, the creation of a digital presence was largely treated as an exercise in graphic design and basic informational broadcasting, functioning as little more than a static, electronic brochure. Today, that paradigm is entirely obsolete. A modern website is a highly complex, algorithmic entity that must simultaneously interface with human cognitive behaviors and the extraordinarily rigid, unforgiving parsing parameters of machine-learning search crawlers. At the absolute center of this intersection lies Search Engine Optimization (SEO).

To define SEO merely as a marketing tactic is a severe mischaracterization. In the current era, SEO is the foundational architectural blueprint upon which all digital development must be based. For local businesses attempting to secure visibility in saturated geographic markets, navigating this ecosystem is notoriously difficult. The rules governing search visibility are multifaceted, occasionally opaque, and subject to continuous, automated algorithmic refinement. A single development misstep, such as a misplaced canonical tag, an unoptimized JavaScript payload, or a subtle, single-character inconsistency in business address formatting, can precipitate catastrophic drops in organic traffic, algorithmic suspensions, and subsequent revenue collapse.

The sheer complexity of adhering to these rules requires an advanced, multidimensional conceptual framework. To fully grasp the severity, depth, and operational reality of modern SEO website development, it is necessary to analyze the discipline through three distinct yet tightly interlocking paradigms. First, it must be understood as an uncompromising, rigorous technical engineering methodology for building software and structuring code. Second, it can be visualized as a complex, highly gamified point system where developers and digital strategists continuously vie for maximum algorithmic scores against competing domains. Finally, it must be recognized as mandatory compliance within a heavily regulated market, where search engines operate as natural monopolies dictating the absolute macroeconomic rules of engagement for digital real estate.

Through these three analytical lenses, the subsequent report will exhaustively dissect the profound intricacies of SEO-friendly code, hyper-local search optimization, and the uncompromising legal, technical, and algorithmic frameworks that govern the internet today.

Paradigm I: SEO as an Uncompromising Technical Engineering Discipline

The absolute foundational requirement of any digital presence is the underlying codebase. Before a search engine can rank a website, it must be able to discover, crawl, render, and comprehend the digital document. The concept of “SEO-friendly code” refers to the meticulous practice of structuring HTML, Cascading Style Sheets (CSS), and JavaScript (JS) to maximize this machine comprehension, rendering efficiency, and semantic clarity. Search engines deploy automated bots (spiders) that must download and parse a web page as closely as possible to how a human user would experience it. When developers obscure resources, bloat the Document Object Model (DOM), or utilize non-semantic, purely stylistic tags, they fundamentally disrupt the crawler’s ability to interpret the value and relevance of the page.

The Ontology of Semantic Code Architecture

Clean, organized, and semantically pure HTML structure is the absolute bedrock of this technical methodology. Modern developers are mandated to utilize semantic HTML5 tags such as <header>, <section>, <article>, <aside>, and <footer>. These tags transcend mere visual formatting; they provide critical ontological context to the machine. An <article> tag, for instance, signals to the parsing algorithm that the enclosed text is a self-contained, syndicatable piece of content, whereas a <nav> tag explicitly delineates the navigational architecture of the domain. By utilizing these tags, developers build a logical map that allows the search engine to understand the relationship between different blocks of text.

Furthermore, the hierarchical deployment of heading tags is a strict architectural rule. A page should possess exactly one <h1> tag to serve as the definitive thematic declaration of the document, summarizing the core entity the page represents. This primary heading must be followed sequentially by <h2> and <h3> tags to map out subtopics in a strictly logical outline. Deviating from this structure, for example by deploying multiple <h1> tags across a single page or by skipping heading levels (e.g., jumping from an <h1> directly to an <h4>) for the sake of visual font sizing, destroys the scannability of the document. This failure confounds screen readers used for accessibility and disrupts the algorithmic parser’s ability to determine the primary topic of the page.
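The outline rules above lend themselves to an automated check. The following is a minimal sketch, assuming the page HTML is already available as a string; the names `HeadingAudit` and `audit_headings` are hypothetical and chosen only for illustration.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # Heading tags are h1..h6; record the numeric level.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def audit_headings(html):
    """Return a list of human-readable problems found in the heading outline."""
    parser = HeadingAudit()
    parser.feed(html)
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    # A downward jump of more than one level (e.g. h1 -> h4) is a skipped level.
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            problems.append(f"skipped level: <h{prev}> followed by <h{cur}>")
    return problems
```

An empty list means the outline satisfies both rules. Moving back up the hierarchy (an <h2> after an <h3>) is deliberately not flagged, since that is how a new section begins.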

The optimization of meta elements embedded within the <head> of the HTML document requires equal precision. Title tags should stay within roughly 50 to 60 characters to prevent visual truncation in the Search Engine Results Pages (SERPs) while naturally incorporating primary semantic entities and location modifiers without resorting to the heavily penalized practice of keyword stuffing. Meta descriptions, though no longer a direct ranking factor, continue to serve as the primary conversion mechanism on the SERP, requiring compelling, intent-driven copy within roughly 150 to 160 characters. Failing to implement these elements accurately, or letting a poorly configured Content Management System (CMS) generate identical meta tags across thousands of pages, introduces severe semantic ambiguity into the search index. This confusion routinely results in algorithmically enforced demotions, as the search engine cannot determine which URL is the canonical source of truth.
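As an illustration of how these constraints can be enforced before publishing, here is a small sketch. It assumes titles have already been extracted into a URL-to-title mapping. The character ranges are the guideline figures quoted in the text, not values enforced by any search engine, and the function names are hypothetical.

```python
from collections import defaultdict

TITLE_RANGE = (50, 60)          # character guideline quoted in the text
DESCRIPTION_RANGE = (150, 160)  # character guideline quoted in the text


def length_ok(text, bounds):
    """True when the stripped text length falls inside the inclusive bounds."""
    low, high = bounds
    return low <= len(text.strip()) <= high


def find_duplicate_titles(pages):
    """pages maps URL -> title; returns normalized titles shared by several URLs."""
    urls_by_title = defaultdict(list)
    for url, title in pages.items():
        # Normalize case and surrounding whitespace so near-identical titles collide.
        urls_by_title[title.strip().lower()].append(url)
    return {t: sorted(urls) for t, urls in urls_by_title.items() if len(urls) > 1}
```

Running `find_duplicate_titles` over a CMS export is one way to surface the template-driven duplication described above before a crawler does.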

Performance Optimization, Rendering Pipelines, and Mobile-First Indexing

Beyond semantic structure, site speed optimization has transitioned from a recommended best practice to a primary, merciless algorithmic gatekeeper. Search engines, specifically Google, prioritize fast-loading domains because computational latency directly correlates with high user bounce rates and degraded user experiences. Consequently, developers must execute aggressive minification protocols at the server level, systematically stripping HTML, CSS, and JavaScript files of all unnecessary whitespace, developer comments, and redundant characters to reduce payload size.

The architectural pattern of a site must also prioritize the intelligent delivery of rendering resources. Developers must confront the critical issue of render-blocking scripts: JavaScript files that force the browser to pause the parsing of the HTML until the script is fully downloaded, parsed, and executed. To pass Core Web Vitals assessments, these scripts should be moved to the bottom of the document, loaded asynchronously (async), or deferred (defer) until HTML parsing is complete. Simultaneously, the implementation of “lazy loading” for multimedia assets is mandatory. This technique ensures that heavy resources, such as high-resolution images and embedded videos, are only requested from the server when the user scrolls and the asset approaches the active viewport, drastically reducing initial load times.
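Both patterns can be detected statically. The sketch below is one possible audit, assuming the HTML is available as a string. It flags external scripts in the <head> that carry neither `async` nor `defer` (module scripts are skipped because browsers defer them by default) and images without `loading="lazy"`. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class LoadingAudit(HTMLParser):
    """Finds render-blocking head scripts and images that are not lazy-loaded."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking_scripts = []
        self.eager_images = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # boolean attributes such as async map to None
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head and "src" in attrs:
            deferred = "async" in attrs or "defer" in attrs
            if not deferred and attrs.get("type") != "module":
                self.blocking_scripts.append(attrs["src"])
        elif tag == "img" and attrs.get("loading") != "lazy":
            self.eager_images.append(attrs.get("src"))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def audit_loading(html):
    """Return (blocking script URLs, image URLs without loading="lazy")."""
    parser = LoadingAudit()
    parser.feed(html)
    return parser.blocking_scripts, parser.eager_images
```

The image list is a review queue, not a list of errors: images visible in the initial viewport, such as a hero image, are usually better loaded eagerly, so only below-the-fold assets should be switched to lazy loading.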

These stringent performance metrics are amplified by the current reality of mobile-first indexing. Google no longer uses the desktop version of a website as the primary basis for its index; it predominantly crawls and evaluates the mobile version of each page. Consequently, the codebase must support highly responsive design utilizing flexible layouts, fluid CSS grids, and scalable typography. If a desktop site features rich, highly relevant content that is hidden, truncated, or locked behind non-functional touch elements on the mobile version, that content functionally ceases to exist in the eyes of the search engine.

Media Indexing and Semantic Accessibility

Visual media represents a massive secondary channel for local search visibility, yet it is routinely mismanaged at the development level. Images must not only be compressed into next-generation formats (such as WebP or AVIF) to reduce file weight, but developers are strictly required to manually code descriptive alt text attributes into every image tag.

Alt text serves an indispensable dual purpose. First, it provides critical accessibility context for screen-reading software utilized by visually impaired users, describing the visual data aloud. Second, because algorithmic crawlers lack human vision, the alt text acts as the literal translation of the image for the machine, allowing the search engine to “see” the image and index it appropriately for topical image search queries. The omission of alt text is considered a critical technical failure in modern web development, resulting in the total loss of potential image search traffic and signaling to the algorithm that the website is exclusionary and non-compliant with basic web accessibility standards.
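A simple audit can surface images that lack alt text. This sketch assumes the HTML is available as a string and uses hypothetical names. It separates images with no `alt` attribute at all from those with an empty `alt=""`, which HTML permits for purely decorative images.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Separates images with no alt attribute from those with an empty one."""

    def __init__(self):
        super().__init__()
        self.missing = []     # no alt attribute at all
        self.decorative = []  # alt present but empty

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        src = attrs.get("src")
        if "alt" not in attrs:
            self.missing.append(src)
        elif not (attrs["alt"] or "").strip():
            self.decorative.append(src)


def audit_alt_text(html):
    """Return (images missing alt, images with empty alt)."""
    parser = AltTextAudit()
    parser.feed(html)
    return parser.missing, parser.decorative
```

The first list contains outright omissions. The second deserves a manual look, to confirm each image really is decorative and not content that needs a description.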

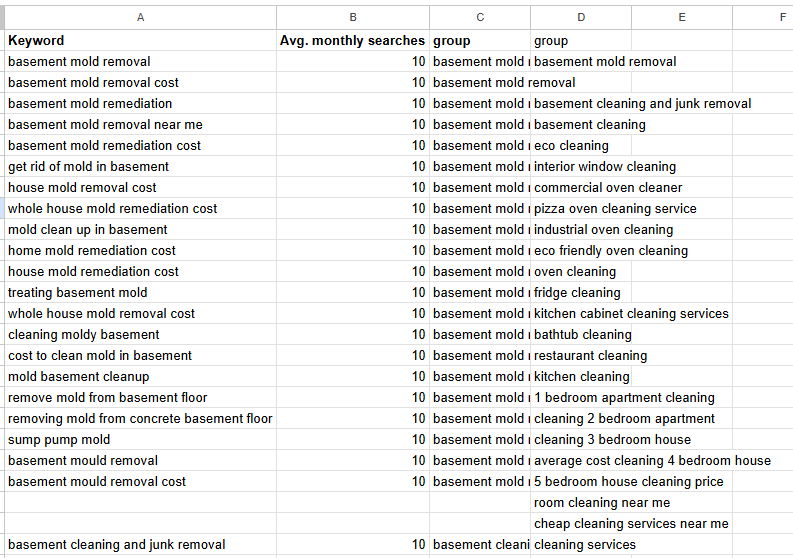

Architecting the Ultimate Local Contact Page: The Data Gathering Node

To understand how technical engineering intersects directly with local SEO ranking algorithms, one must thoroughly examine the anatomy of a local business contact page. Historically, developers and business owners viewed the contact page as an afterthought: a simple digital dead-end containing a basic Name, Address, and Phone number (NAP) string and a generic, often unstyled submission form. Modern SEO requires viewing this page through an entirely different paradigm: it is a primary algorithmic asset and a critical data-gathering node for search engines.

Insights from former heads of Google Business Profile Support confirm that Google’s crawlers are specifically engineered to parse contact pages to harvest localized data and establish entity prominence. When a business provides only a sparse contact form, they are actively denying the search engine the trust signals it desperately seeks, severely capping their local ranking potential. To engineer the perfect local business contact page built specifically for Google’s parsing mechanisms and user conversions, developers must integrate six mandatory pillars of information architecture into the page’s HTML:

- Business Identity: The page must boldly establish the entity’s brand. This goes beyond a simple logo; it requires clear, explicit statements of the company’s legal name, its primary service categories, and its historical context within the community.

- Complete Contact Information: The NAP data must be comprehensive and meticulously formatted. It must feature a highly specific address hierarchy, local (not toll-free) functional phone numbers, and professional, domain-based email addresses.

- Trust Factors and Social Proof: The contact page must act as a nexus of credibility. Developers should dynamically embed authentic, verifiable social proof, including real-time feeds of local reviews, industry accreditation badges, and links to external directory profiles.

- Location-Specific Content: To feed the local algorithm, the page must include deep geographical context. This involves embedding interactive maps, listing specific service areas or neighborhoods covered, providing driving directions from major local landmarks, and detailing local transit routes to the physical storefront.

- Amenities: Search engines crave granular data to serve highly specific user queries. The contact page should explicitly list facility amenities, such as wheelchair accessibility, free parking availability, Wi-Fi access, or specific payment methods accepted.

- A Clear Call-to-Action (CTA) Button: The page must drive a definitive user behavior. Whether it is “Book an Appointment,” “Get a Quote,” or “Call Now,” the CTA must be technically flawless, utilizing fast-loading, bug-free interactive elements that log positive engagement signals.

By treating the contact page not as a passive directory, but as a heavily engineered, data-rich local SEO asset, developers provide the exact semantic signals the algorithm requires to validate the business’s physical existence and geographic relevance.

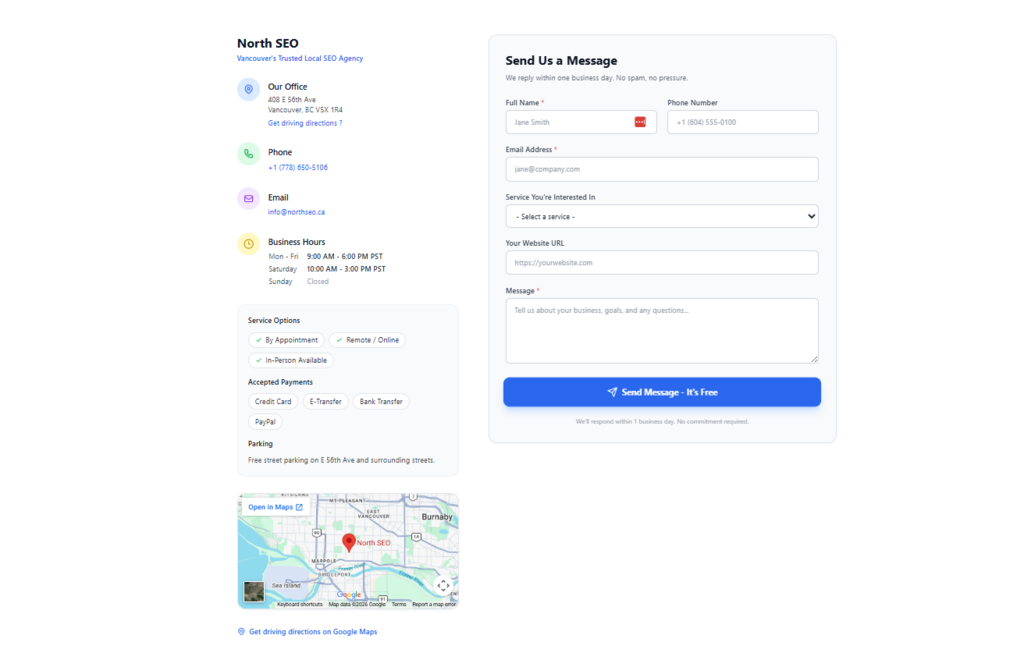

Trust Hubs and Strict Misrepresentation Compliance

The rigor of SEO development extends far beyond performance metrics and contact pages into the high-stakes realm of algorithmic trust and commercial safety. Search engines, particularly within their e-commerce ecosystems and local business platforms (such as Google Merchant Center and Google Business Profiles), aggressively police a concept known as “misrepresentation”. While non-experts often view misrepresentation as a simple marketing or policy issue, achieving compliance is fundamentally a front-end development and deep architectural mandate.

To pass these algorithmic trust checks, a website must be engineered from the ground up as a “Trust Hub.” This requires the hardcoding of specific navigational and informational elements that prove the entity is a legitimate, legally operating business. Developers must ensure the site operates exclusively on HTTPS encryption protocols supported by a valid SSL certificate. Unencrypted data transfer (HTTP) is an immediate, fatal disqualifier for algorithmic trust, often resulting in browser-level warnings that block users from accessing the site.

The header navigation must serve as an anchor of credibility, explicitly linking to transparency pages, including a comprehensive “About Us” section that details business registration details, corporate identity, and operational history. More critically, the footer of the website is subjected to rigorous structural and algorithmic scrutiny. The footer is not merely a repeated design element used to balance the visual weight of the page; it is a site-wide, global anchor for legal compliance. Development specifications must dictate that the footer contains clearly labeled, direct links to comprehensive Privacy Policies, Shipping and Fulfillment details, Return and Refund Policies, Terms of Service, and explicit Customer Support channels.

The formatting of the business contact information within this footer must follow a rigid, universally recognizable schema: Street name, House number, City, State/Province, Zip/Postal Code, and Country, accompanied by a live, professional domain-based email address (that is, an address at the business’s own domain rather than a generic Gmail address) and a functional business phone number. Any deviation from this formatting, or the presence of broken internal links, dead external buttons, and unhandled 404 error pages, acts as a strong negative signal that can trigger automated misrepresentation suspensions.
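The footer schema described here can be modeled as a small data structure with a completeness check. This is only a sketch: the class name, the field names, and the list of generic email domains are assumptions made for illustration, and the address order simply follows the sequence given in the text.

```python
from dataclasses import dataclass, fields

# Illustrative list only; extend it to match the free-mail providers you want to flag.
GENERIC_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


@dataclass(frozen=True)
class FooterContact:
    street: str
    house_number: str
    city: str
    region: str
    postal_code: str
    country: str
    email: str
    phone: str

    def address_line(self):
        """Join the address parts in the order the text prescribes."""
        parts = (self.street, self.house_number, self.city,
                 self.region, self.postal_code, self.country)
        return ", ".join(part.strip() for part in parts)

    def problems(self, site_domain):
        """Return a list of issues; an empty list means the block looks complete."""
        issues = [f"missing {f.name}" for f in fields(self)
                  if not getattr(self, f.name).strip()]
        domain = self.email.rpartition("@")[2].strip().lower()
        if domain in GENERIC_EMAIL_DOMAINS:
            issues.append(f"generic email domain: {domain}")
        elif domain and domain != site_domain.lower():
            issues.append(f"email domain {domain} does not match {site_domain}")
        return issues
```

Rendering the footer from a single `FooterContact` instance, instead of hand-typing it into several templates, is one way to keep the NAP string identical everywhere it appears.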

Furthermore, developers must implement continuous integration and automated testing to guarantee that the site’s checkout stability is flawless, ensuring that all payment gateways execute securely without anomalous, unauthorized redirects. The backend configuration must maintain absolute, 100% data parity with the frontend website. If a shipping policy stated in the HTML paragraph text differs by a single day from the backend XML feed submitted to the search engine, the entity risks complete, automated de-indexing for deceptive practices.
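A parity check of this kind is straightforward to automate in a CI pipeline. The sketch below assumes both the on-page policy values and the feed values have already been extracted into dictionaries. The field names in the usage example are hypothetical, and how the values are scraped or parsed is out of scope.

```python
def parity_mismatches(frontend, feed):
    """Compare two mappings of policy field -> stated value.

    Returns {field: (frontend_value, feed_value)} for every field whose values
    differ, including fields present on only one side (reported as None).
    """
    mismatches = {}
    for key in sorted(set(frontend) | set(feed)):
        if frontend.get(key) != feed.get(key):
            mismatches[key] = (frontend.get(key), feed.get(key))
    return mismatches
```

For instance, `parity_mismatches({"shipping_days": 3}, {"shipping_days": 4})` returns `{"shipping_days": (3, 4)}`, the kind of one-day discrepancy the paragraph warns about. A build step could fail whenever the result is non-empty.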

Paradigm II: The Algorithmic Point System and Gamified Visibility

To fully comprehend the immense difficulty of satisfying these sprawling technical, semantic, and trust-based parameters, it is highly effective to conceptualize SEO as a complex, invisible, and highly gamified point system. In this operational model, the search engine’s algorithm acts as the ultimate, omniscient arbiter, maintaining a hidden, dynamic scorecard for every single URL on the internet. A newly published website begins with a baseline score of zero. Every subsequent architectural decision, piece of content published, technical modification, or community engagement metric either accrues points toward visibility or triggers severe deductive penalties.

Accruing Points Through Technical and Local Architecture

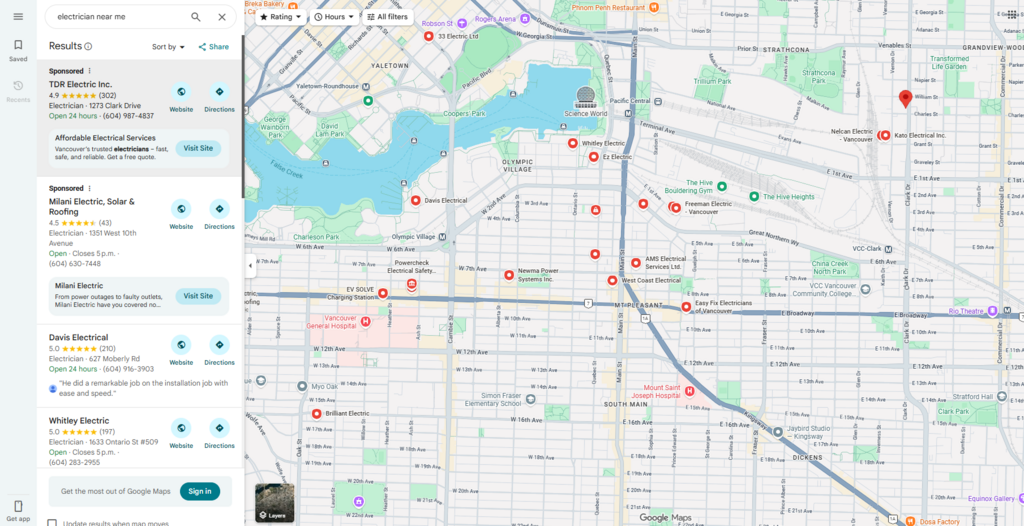

The acquisition of points in the SEO game is achieved through the meticulous, sequential satisfaction of local and technical audit checklists. For local businesses, establishing a verified, fully optimized Google Business Profile (GBP) is the functional equivalent of creating a character in a massive multiplayer game: it is the absolute prerequisite for entering the local, map-based search results arena.

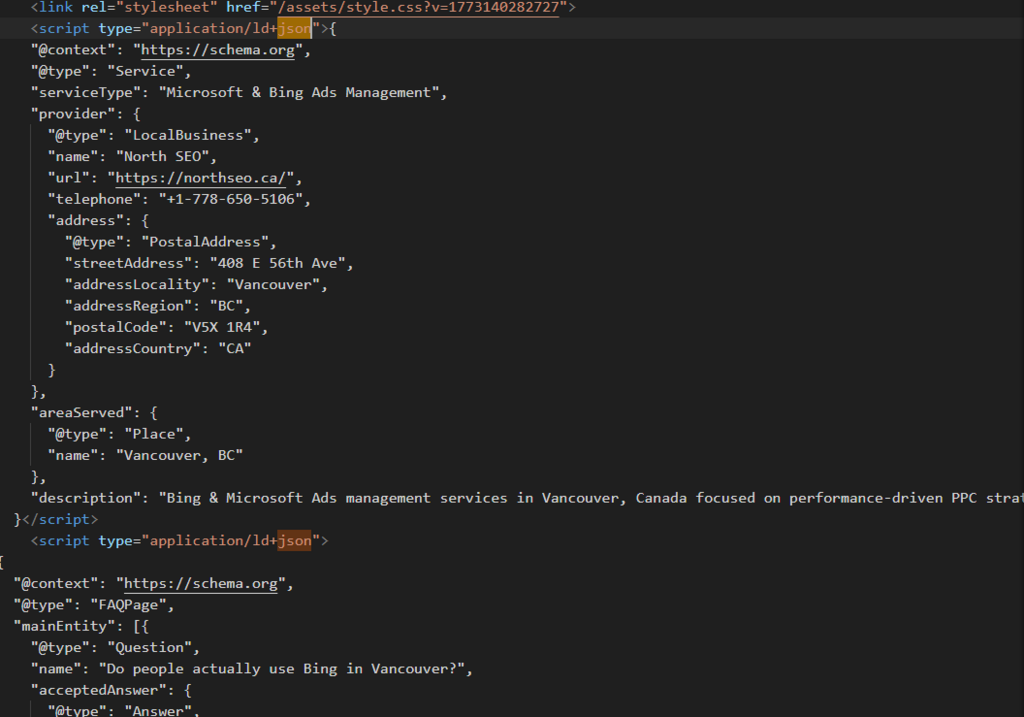

Within the codebase itself, points are heavily weighted toward absolute semantic clarity. Implementing Schema Markup (structured data) generates some of the highest potential algorithmic rewards available to a developer. Schema is a highly specialized structured-data vocabulary, most commonly serialized as JavaScript Object Notation for Linked Data (JSON-LD), that developers carefully inject into the <head> of a document. It circumvents the need for the algorithm to “guess” what the page is about by explicitly translating the text into machine-readable, standardized entities.

When a developer describes a local business address, precise operating hours, customer review aggregates, and upcoming event dates in JSON-LD schema markup, they bypass natural language processing ambiguities and directly hand the search engine the exact, structured data points required. This highly technical action can yield substantial visibility bonuses, because it makes the page eligible for “Rich Snippets” on the SERP, such as review star ratings, interactive event carousels, or FAQ accordions directly within the search results.
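To make the idea concrete, the following sketch builds a JSON-LD block for a local business using schema.org's `LocalBusiness`, `PostalAddress`, and `OpeningHoursSpecification` types. The function name, its parameters, and the weekday-only opening hours are illustrative assumptions. A real deployment would pull these values from the same source of truth as the visible page.

```python
import json

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]


def local_business_jsonld(name, url, telephone, street, city, region,
                          postal_code, country, opens, closes):
    """Return a <script> tag containing LocalBusiness structured data."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        # Assumption for the sketch: identical hours on every weekday.
        "openingHoursSpecification": [{
            "@type": "OpeningHoursSpecification",
            "dayOfWeek": WEEKDAYS,
            "opens": opens,
            "closes": closes,
        }],
    }
    # Escape "</" so a stray "</script>" inside a value cannot end the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

The markup should mirror what a visitor can see on the page. Structured data that contradicts the visible content undermines the data-parity requirement discussed earlier.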

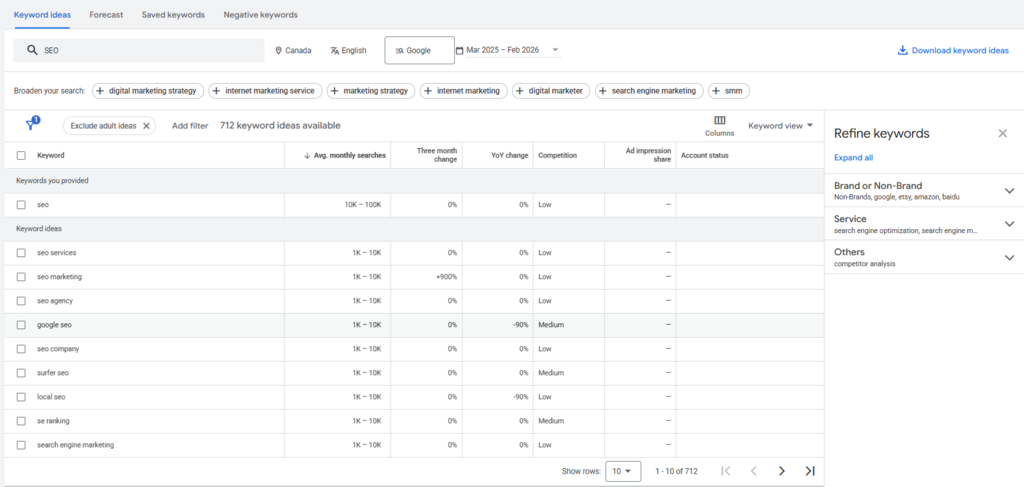

The point system also aggressively awards scores for the strategic acquisition of hyper-local relevance. Generating generic, broad-scope content yields rapidly diminishing returns in the modern algorithm. However, creating highly specific, locality-driven landing pages creates highly valued relevance vectors. For example, a retail business operating in a major metropolitan area accrues significantly more points by developing content specifically targeting micro-neighborhoods or distinct commercial corridors rather than relying on broad, city-level targeting. The point system evaluates the semantic proximity of these location markers against the physical geocoordinates of the user’s search intent.

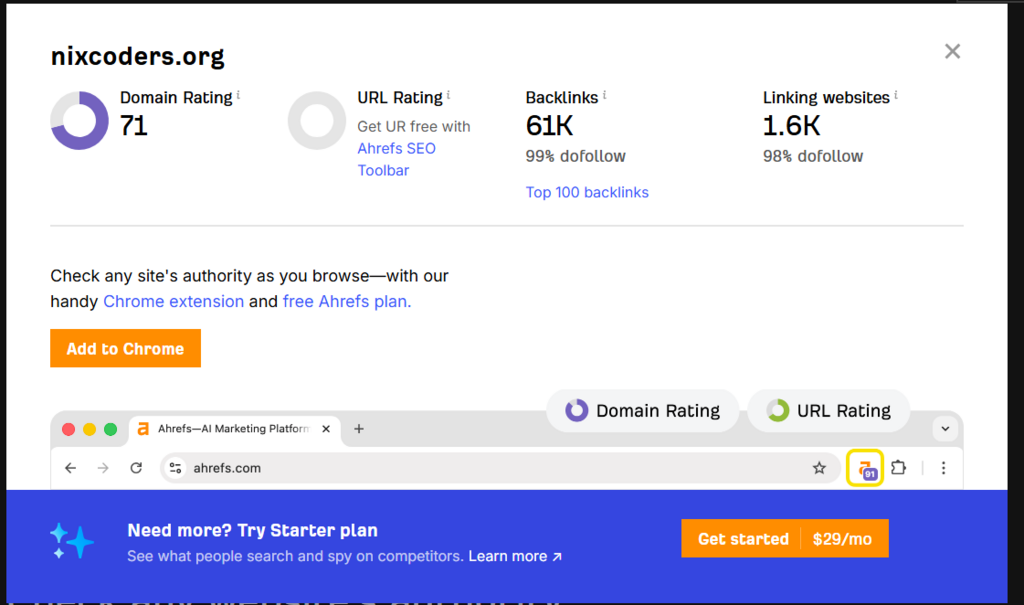

Similarly, the acquisition of high-quality local backlinks (hyperlinks from other geographically relevant, authoritative websites) and the establishment of consistent local citations (mentions of the business NAP across prominent regional business directories) act as algorithmic votes of confidence. Each external validation steadily increases the domain’s overall authority score, pushing it higher up the local rankings leaderboard.

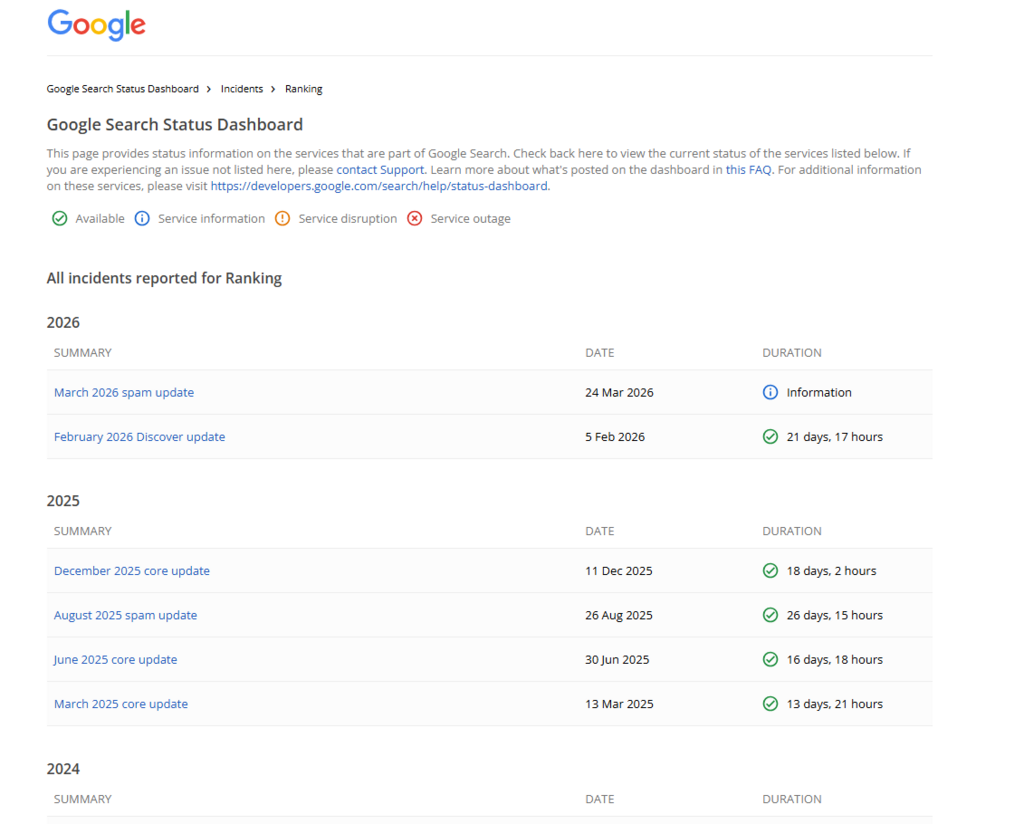

Deductions, Algorithmic Penalties, and Disqualification

Conversely, the SEO game features strict, uncompromising rules where violations result in immediate, devastating point deductions or, in severe cases, outright algorithmic disqualification. The foundational guidelines explicitly outlaw antiquated, manipulative tactics. Keyword stuffing (the unnatural, excessive, and forced repetition of target phrases within the prose or hidden within the code) violates core spam policies and results in severe algorithmic demotion. As search algorithms have evolved to utilize advanced Natural Language Processing (NLP) models, they can easily detect, isolate, and penalize prose that is synthetically engineered for machines rather than organically written for human comprehension.

Technical failures incur equally heavy deductions. Missing canonical tags (<link rel="canonical">) lead to duplicate content confusion. If a CMS dynamically generates three different URLs for the exact same piece of content, the algorithm is forced to guess which version to rank, thereby splitting and diluting the potential score across multiple pages. Excessive DOM size, render-blocking JavaScript, uncompressed imagery, and the failure to provide a seamless, responsive mobile experience all count against the Core Web Vitals assessment. A poor technical score can effectively neutralize any positive gains a business may have made through high-quality content creation or backlink acquisition.
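The three-URLs-for-one-page scenario can be handled by normalizing every variant to a single canonical URL and emitting the corresponding tag. The sketch below is one possible normalization policy, not a standard. Forcing HTTPS, stripping trailing slashes, and the particular list of tracking parameters are all assumptions that would need to match the actual site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Illustrative: query parameters that never change the content being served.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def canonical_url(url):
    """Collapse common URL variants of one page into a single form."""
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query)
             if k.lower() not in TRACKING_PARAMS]
    path = parts.path.rstrip("/") or "/"
    # Assumptions: the site is served over HTTPS and hostnames are case-insensitive.
    return urlunsplit(("https", parts.netloc.lower(), path,
                       urlencode(sorted(query)), ""))


def canonical_link_tag(url):
    """Return the <link rel="canonical"> tag for the page at this URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'
```

Every variant of a page should emit the same tag, so that the search engine consolidates signals onto one URL and no longer has to guess between them.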

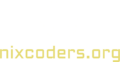

To formalize this concept, the following table conceptualizes the modern Local SEO audit as a gamified scoring matrix, demonstrating how specific development implementations translate directly into algorithmic impacts and penalty risks:

| Optimization Category | Development Action / Implementation Strategy | Conceptual Algorithmic Point Impact | Risk Profile & Penalty Vector |
| --- | --- | --- | --- |
| Technical Architecture | Deployment of valid semantic HTML5 (single H1, ordered H2/H3 cascade). | High Positive Accumulation | Low Risk |
| Technical Architecture | Serving uncompressed legacy image formats and omitting alt text attributes. | Steady Negative Deduction | High Risk (accessibility violation and indexing failure) [3] |
| Technical Architecture | Deployment of render-blocking JS and heavy inline CSS styling. | Severe Negative Deduction | High Risk (Core Web Vitals failure; mobile demotion) [3] |
| Trust & Compliance | Perfect NAP consistency across frontend rendering and backend footer architecture. | High Positive Accumulation | Low Risk |
| Trust & Compliance | Presence of broken links, 404s, or mismatched shipping/return policies. | Immediate Negative Deduction | Severe Risk (automated misrepresentation suspension) [4] |
| Local Authority | Verified Google Business Profile with active, continuous review management. | Maximum Positive Accumulation | Low Risk [13] |
| Local Authority | Injection of error-free JSON-LD Local Business Schema Markup in the <head>. | High Positive Accumulation | Low Risk (enables Rich Snippet eligibility) [1] |
| Content Strategy | Creation of hyper-local neighborhood targeting pages (e.g., Kitsilano, Yaletown). | High Positive Accumulation | Low Risk (aligns with user proximity intent) [14] |
| Content Strategy | Keyword stuffing, hidden CSS text (white text on white background), or scraped content. | Maximum Negative Deduction | Fatal Risk (manual action / total domain de-indexing) [1] |
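The matrix can be expressed as a toy scoring function. The numeric weights below are invented purely for illustration: the table gives only qualitative impact labels, and no search engine publishes a scorecard of this kind. The sketch shows the mechanics of the metaphor (a baseline of zero, additive gains and deductions, and a disqualifying class of violation), not a model of any real ranking system.

```python
# Hypothetical weights: the table above supplies labels, not numbers.
IMPACT_POINTS = {
    "Maximum Positive Accumulation": 5,
    "High Positive Accumulation": 3,
    "Steady Negative Deduction": -2,
    "Immediate Negative Deduction": -3,
    "Severe Negative Deduction": -4,
    "Maximum Negative Deduction": -5,
}

# Labels the table pairs with a fatal risk profile.
FATAL_IMPACTS = {"Maximum Negative Deduction"}


def score_site(findings):
    """findings is a list of impact labels; returns (score, disqualified)."""
    score = sum(IMPACT_POINTS[label] for label in findings)  # baseline is zero
    disqualified = any(label in FATAL_IMPACTS for label in findings)
    return score, disqualified
```

A site with semantic HTML and a verified profile but render-blocking scripts would net `3 + 5 - 4 = 4` under these made-up weights. A single fatal finding flags the site as disqualified regardless of its total.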

The Meta-Game: User Engagement and UX Gamification

The point system extends far beyond the developer’s direct control over the codebase and deeply into the unpredictable realm of human behavioral psychology. Search engines increasingly monitor real-time local engagement, dwell time, click-through rates, and post-click behavioral signals to determine ultimate relevance. If a user clicks a search result, lands on the page, and immediately returns to the search engine (a behavior known as a “bounce”), the algorithm deduces that the result was highly unsatisfactory, resulting in a point deduction. Conversely, if the user stays, reads, interacts, and clicks deeply into the site’s architecture, the site accrues massive engagement points, validating its high position on the SERP.

To win this highly competitive layer of the meta-game, digital businesses actively deploy gamification strategies directly onto their own websites. Gamification taps into fundamental psychological levers: anticipation, dopamine release, and variable reward systems. By incorporating point scoring, tiered loyalty programs, interactive badges, and visual progress tracking for users, businesses drastically increase the time spent on the site.

For example, massive retail and cosmetic brands utilize interactive challenges where users earn digital points for completing mundane tasks, such as utilizing in-store shade-matching tools, leaving reviews, or signing up for mobile SMS alerts. This gamified experience results in massive, verifiable spikes in active community participation. When a local business transforms standard interactions (leaving a localized product review, sharing community-focused content, or completing a volunteer task) into a gamified quest, it drastically reduces bounce rates. This feeds potent, positive behavioral data back to the search engine algorithm, pushing the algorithm to award the site higher rankings in hyper-competitive “near me” search results.

Paradigm III: The Search Engine as a Dominant Market Regulator

While the gamified point system provides an excellent tactical framework for day-to-day execution, the overarching strategic reality is much more imposing and systemic. To understand the true, underlying difficulty of SEO, one must view the search engine not merely as a helpful software tool, but as an aggressive, dominant market regulator, a natural monopoly, and a de facto public utility. Google, possessing an overwhelming, near-total market share in global internet discovery, dictates the macroeconomic conditions of the entire digital economy.

The Economics of the Digital Grocery Store

The brutal economics of search visibility can be accurately modeled using the analogy of a massive, monopolistic grocery store chain. In the physical retail market, dominant grocery chains hold total, unyielding authority over shelf space allocation. Manufacturers of physical goods compete fiercely for this space, often forced to pay exorbitant “slotting allowances” or slotting fees to secure prime, eye-level placement for their goods. If a manufacturer refuses or cannot afford to play by the grocer’s rules, their product is banished to the bottom shelf, or hidden in a dusty back aisle. For the consumer, a product on the bottom shelf effectively ceases to exist.

In the digital economy, Google is the sole owner of the grocery store, the shelves, and the entire cataloging system. A website is merely a product seeking placement. Another apt metaphor is that of a massive library. If a book (website) is not physically in the library, or if it is cataloged improperly due to incorrect dynamic URLs or missing server redirects, the librarian (the search algorithm) cannot serve it to the patron. SEO developers are essentially packaging engineers, forced to mold their digital products into the exact dimensions, weight limits, and labeling requirements dictated by the store owner to secure visibility.

The “Search Essentials” published by search engines operate as the explicit statutory regulations of this digital market. They are not mere suggestions or helpful tips; they are the uncompromising compliance codes required to hold a license to do business online. Practices defined as “spam” by the regulator, such as covert link schemes, IP cloaking, or scraped content, are the exact equivalent of placing counterfeit or dangerous goods on the store shelves. When these violations are detected by the algorithmic enforcers, the regulator executes a manual penalty, effectively blacklisting and liquidating the vendor from the market entirely.

Antitrust, Data Dominance, and Self-Preferencing

The regulatory power of search engines has reached such unprecedented, monopolistic levels that geopolitical legal frameworks have been forced to intervene. Groundbreaking legislation like the European Digital Markets Act (DMA) and ongoing, historic federal antitrust litigation in the United States have formally classified these platforms as digital “gatekeepers”. The central legal tension in these international courts revolves around massive data infrastructure dominance and the illegal practice of “self-preferencing”.

Modern search markets rely fundamentally on massive, continuous user data aggregation to refine their machine-learning algorithms. The dominant player holds a nearly insurmountable, self-perpetuating data advantage. Furthermore, regulators have heavily penalized search engines for self-preferencing: the act of a vertically integrated monopoly artificially boosting its own adjacent, proprietary products (e.g., its own shopping comparison units, flight aggregators, or proprietary local map packs) to the absolute top of the search results, thereby artificially demoting and starving independent competitors of traffic. Recent legal mandates and antitrust rulings have explored extreme remedies, such as forcing dominant search engines to share specific, anonymized data snapshots with rival search indexes in an attempt to simulate a fair, contestable market.

For the SEO developer and local business owner, these macroeconomic antitrust battles have immediate, painful microeconomic consequences. Traditional organic search results (the historical “ten blue links”) are continually pushed further and further down the page, buried beneath the search engine’s proprietary features, ads, and local map packs.

The Regulatory Tax of AI and Zero-Click Searches

The most profound regulatory shift in recent digital history is the rapid rise of the “zero-click search.” Historically, the search engine acted as a transit hub, cataloging information and routing human traffic outward to external, independent websites. Today, through advanced Search Generative Experiences (SGE) powered by Large Language Models (LLMs), the search engine attempts to answer complex user queries directly on the SERP by parsing and synthesizing information extracted from the open web.

This mechanism acts as a brutal regulatory tax on digital businesses. The platform extracts the informational value and labor from the developer’s website, synthesizes it, and presents it to the user. The user is satisfied immediately and has absolutely no incentive to click through to the original source website.

To survive in this heavily taxed, enclosed environment, developers and SEO specialists must fundamentally adapt their strategies. They must pivot toward creating conversational, highly specific, and deeply authoritative content that secures placement inside the generative AI answers themselves. They must aggressively optimize for Google Business Profiles, invest heavily in local service ads, and utilize schema-driven knowledge panels to ensure their brand visibility remains intact even when traditional organic traffic inevitably diminishes. The fundamental rules of the market have shifted: the goal is no longer solely maximizing outbound clicks, but maximizing sheer, unavoidable brand presence within the monopoly’s enclosed, generative ecosystem.

Advanced Hyper-Local Complexity: The Vancouver Application

The absolute synthesis of technical engineering rigor, algorithmic gamification, and strict market regulation is best observed through a specific, highly competitive geographic lens. The digital market in Vancouver, British Columbia, presents a highly advanced, fiercely contested microcosm of these macro SEO trends, serving as an ideal case study for the extreme difficulty of the discipline. To navigate this market successfully, from structuring localized content to engineering the perfect contact page for conversions, businesses often rely on the expertise of local Vancouver SEO agencies and marketing experts, who guide clients through Google’s ranking system and make SEO promotion as easy as possible for the local market.

Precision Neighborhood Targeting in Saturated Markets

By the year 2025, deploying generic, city-wide targeting in a densely populated and highly digitalized metropolitan area like Vancouver is functionally obsolete and mathematically ineffective. The sheer volume of hyper-local “near me” searches, driven almost entirely by mobile devices and voice-activated AI assistants, has surged exponentially. A consumer situated in the Kitsilano neighborhood searching for an emergency service or artisan coffee expects immediate, hyper-proximate results. They are not interested in a business located across the city in East Vancouver, regardless of that business’s overall domain authority.

To compete in this environment, developers must engineer highly complex site architectures that support granular, hyper-local SEO. This involves the programmatic creation of distinct, localized landing pages that target specific micro-neighborhoods, individual streets, and even busy commercial transit corridors. The content strategy must shift away from broad declarations (e.g., “Vancouver Plumber”) toward hyper-specific, intent-driven assets (e.g., “Emergency Plumber on Commercial Drive”). This level of granularity requires embedding hyperlocal parameters dynamically into URL structures, H1 tags, meta descriptions, and contextual prose. Crucially, this must be done with surgical precision to ensure the content reads naturally, avoiding the algorithmic penalties associated with geographic keyword stuffing.
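As a minimal sketch of how such pages might be generated programmatically, the Python below derives a URL path, H1, and meta description from a city, an area, and a service. The names `LandingPage`, `slugify`, and `build_landing_page`, the URL pattern, and the meta description wording are all assumptions made for illustration; a real site would draw unique, human-reviewed copy from its own content system rather than a single template.

```python
import re
import unicodedata
from dataclasses import dataclass


@dataclass
class LandingPage:
    url_path: str
    h1: str
    meta_description: str


def slugify(text: str) -> str:
    """Lowercase the text, drop accents, and join words with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def build_landing_page(
    city: str, area: str, service: str, preposition: str = "in"
) -> LandingPage:
    """Build on-page fields for one service in one neighborhood or street.

    The preposition is supplied by the caller ("in Kitsilano",
    "on Commercial Drive") so the heading reads naturally.
    """
    url_path = f"/{slugify(city)}/{slugify(area)}-{slugify(service)}"
    h1 = f"{service} {preposition} {area}"
    # Hypothetical template text; real pages need distinct copy per area.
    meta_description = (
        f"{service} {preposition} {area}, {city}: "
        "local availability, pricing, and contact details."
    )
    return LandingPage(url_path, h1, meta_description)


page = build_landing_page("Vancouver", "Commercial Drive", "Emergency Plumber", "on")
print(page.url_path)
print(page.h1)
```

Taking the preposition as a parameter is one small way to keep the generated headings from reading like keyword-stuffed boilerplate.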

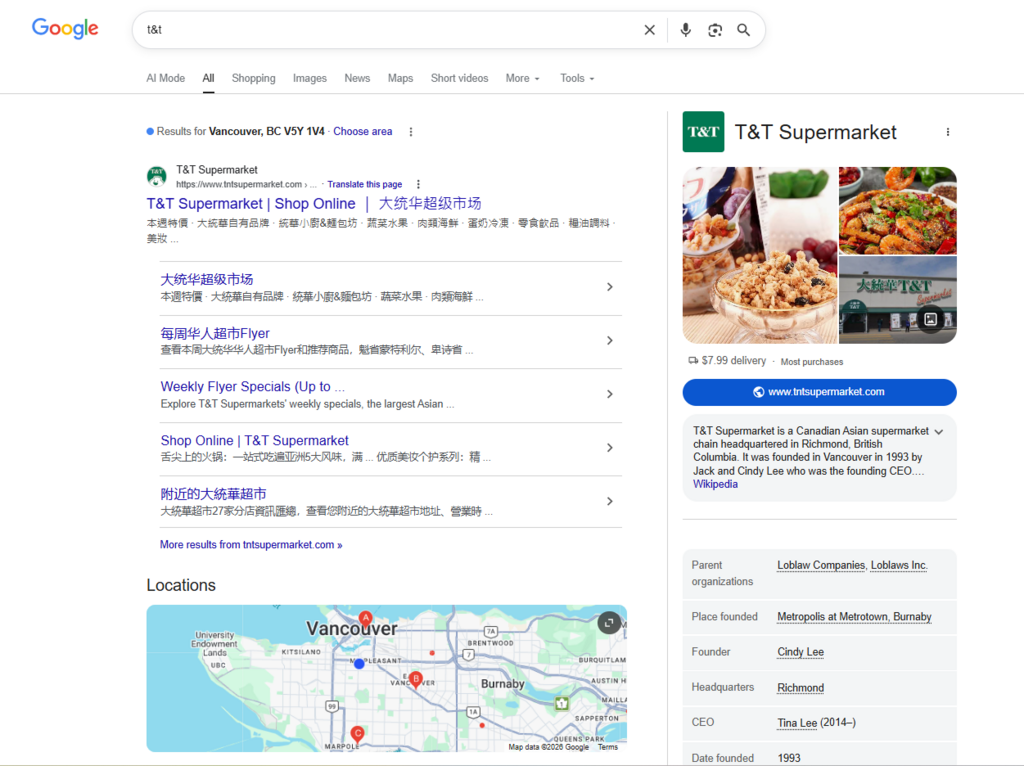

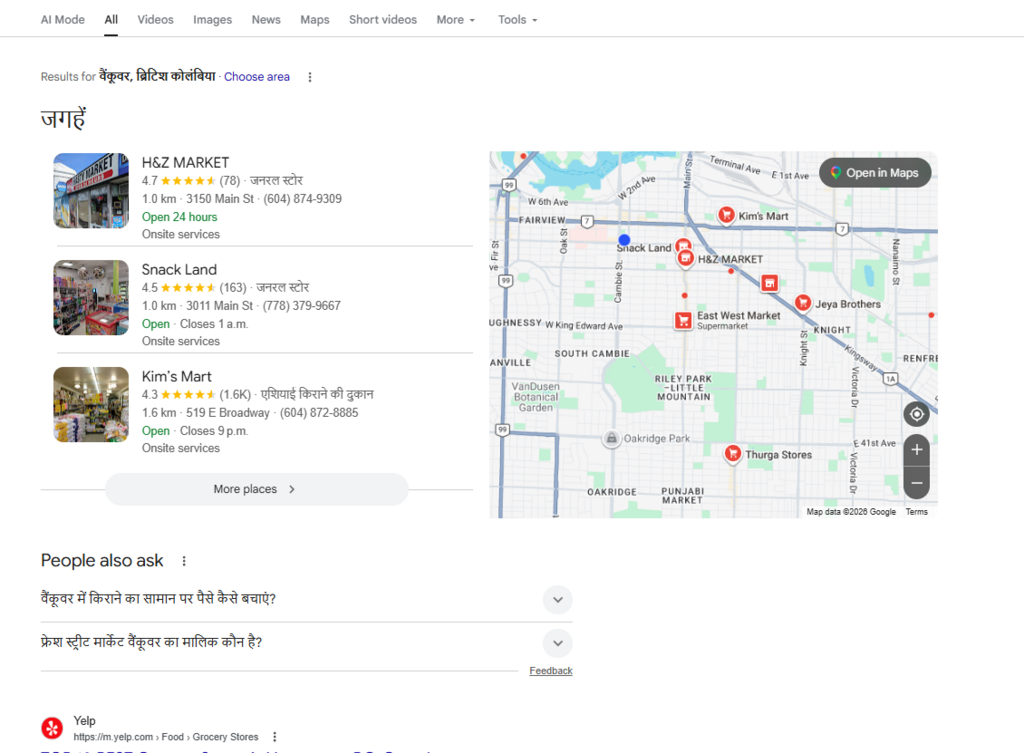

Multilingual SEO Architecture and Linguistic Compliance

Furthermore, the Vancouver market is defined by its profound multiculturalism and linguistic diversity. A massive segment of the local consumer base conducts daily, high-intent searches in Cantonese, Mandarin, Tagalog, Punjabi, and French. A monolingual English website operating in this specific market automatically forfeits a massive percentage of the available digital footprint and potential revenue.

Implementing a true multilingual SEO architecture is an exceptionally tricky, highly technical developmental challenge. It is entirely insufficient, and often algorithmically dangerous, to simply rely on automated, client-side translation plugins. Developers must architect a robust, dual-strategy server implementation that handles both local geographic proximity signals and complex internationalized language formatting simultaneously.

This requires the error-free execution of hreflang attributes within the HTML <head>. The hreflang attribute, set on alternate link elements, explicitly tells the search engine algorithm which language and region a URL targets. It prevents the algorithm from mistakenly treating translated pages as duplicate content. For example, the code must differentiate between a page meant for English speakers in Canada (en-ca) and one meant for French speakers in Canada (fr-ca). The backend server infrastructure must be resilient enough to serve this localized content dynamically based on the user’s browser language preferences and IP geocoordinates, blending the extreme demands of international SEO best practices with hyper-local mapping optimization.
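A short sketch may make the hreflang requirement concrete. The function below emits one alternate link per language variant plus an `x-default` fallback; the same full set would be placed in the <head> of every variant so the annotations stay reciprocal. The function name `hreflang_links` and the `example.com` URLs are hypothetical and not taken from any particular site.

```python
from html import escape
from typing import Dict, List


def hreflang_links(variants: Dict[str, str], default_lang: str) -> List[str]:
    """Return <link rel="alternate"> tags for all language variants of a page.

    `variants` maps an hreflang code (for example "en-ca") to the absolute
    URL of that variant. The variant for `default_lang` is reused as the
    x-default fallback.
    """
    if default_lang not in variants:
        raise ValueError(f"default language {default_lang!r} has no URL")
    links = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in sorted(variants.items())
    ]
    links.append(
        '<link rel="alternate" hreflang="x-default" '
        f'href="{escape(variants[default_lang])}" />'
    )
    return links


# Hypothetical URLs for the English and French Canadian variants.
for tag in hreflang_links(
    {
        "en-ca": "https://example.com/en-ca/plumbing/",
        "fr-ca": "https://example.com/fr-ca/plomberie/",
    },
    default_lang="en-ca",
):
    print(tag)
```

Generating the whole set from one mapping reduces the risk of one variant silently omitting a return link to another.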

The Intersect of Civic Regulation and Algorithmic Rules

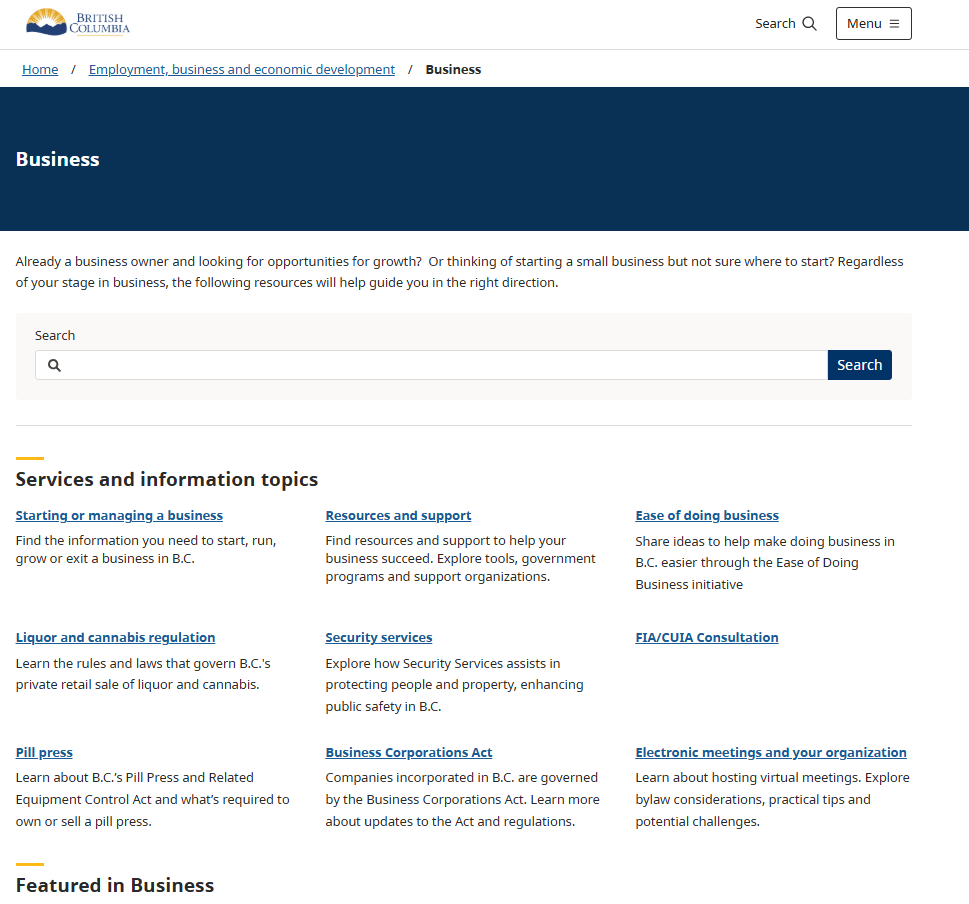

The regulatory environment in regions like Vancouver also introduces direct, unavoidable legislative mandates that intersect violently with SEO development. The Accessible British Columbia Act (ABCA) demands, by law, that regulated organizations and businesses operating within the province identify and eliminate barriers for individuals with disabilities.

In the digital realm, this legislation legally binds web development to the strict, internationally recognized standards of the Web Content Accessibility Guidelines (WCAG). Within the modern digital ecosystem, SEO and accessibility are inextricably linked; they are two sides of the same algorithmic coin. The technical requirements dictated by the ABCA (ensuring screen-reader compatibility, establishing a logical, visually clear focus order for users navigating via keyboards, utilizing high-contrast visual design, and writing comprehensive alt text for all media) are largely the same technical signals that search algorithms use to evaluate baseline site quality and semantic structure.
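One of these requirements, alt text, is easy to audit automatically. The sketch below uses Python's standard `html.parser` module to list images that have no `alt` attribute at all. The class and function names are hypothetical, and the check is deliberately narrow: an empty `alt=""` is accepted because it is the conventional marking for decorative images, and the script cannot judge whether existing alt text is actually descriptive.

```python
from html.parser import HTMLParser
from typing import List


class MissingAltAuditor(HTMLParser):
    """Collect the src of every <img> that lacks an alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        # alt="" is allowed (decorative image); only a missing alt is flagged.
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src") or "(no src)")


def images_missing_alt(html: str) -> List[str]:
    """Return the src values of images without any alt attribute."""
    auditor = MissingAltAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.missing


sample = (
    '<img src="storefront.png" alt="Shop entrance on Commercial Drive">'
    '<img src="divider.png" alt="">'
    '<img src="team.png">'
)
print(images_missing_alt(sample))
```

A check like this covers only a small slice of WCAG; focus order, contrast, and screen-reader behavior still need dedicated tooling and manual testing.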

Furthermore, the ABCA requires businesses to establish explicit public feedback tools and detailed, multi-year accessibility plans. From a development standpoint, these feedback mechanisms must be integrated securely into the site’s contact hubs and footer architecture. This aligns perfectly with the misrepresentation and trust guidelines mandated by Google Merchant Center policies discussed previously. Failure to implement these accessibility features not only exposes a business to severe legal jeopardy and civic reputational damage but also results in systematic, continuous demotion within the algorithmic point system, as search engines increasingly penalize exclusionary, non-compliant codebases.

To crystallize these complex, intersecting requirements, the following table synthesizes the specific technical, strategic, and regulatory requirements necessary for competing in an advanced local SEO landscape like Vancouver:

| Local SEO Imperative | Technical Development Requirement | Strategic & Algorithmic Outcome |
| --- | --- | --- |
| Hyper-Local Targeting | Dynamic, programmatic creation of neighborhood-specific URLs and structured content modules (e.g., /vancouver/kitsilano-services). | Captures lucrative, long-tail, high-intent “near me” voice and mobile search traffic by matching exact geographic intent. |
| Multilingual Architecture | Server-side implementation of exact hreflang tags; routing without infinite auto-redirect loops. | Ranks accurately across distinct language SERPs (English, Mandarin, etc.) while totally avoiding duplicate content algorithmic penalties. |
| Voice Search Optimization | Integration of natural language FAQ schemas (JSON-LD) explicitly addressing conversational, long-tail queries. | Secures vital visibility in Zero-Click search environments, Voice Assistant answers, and AI overviews. |
| ABCA Legal Compliance (Accessibility) | Strict adherence to WCAG standards; ARIA label integration for dynamic elements; mandatory descriptive alt-text; keyboard-only navigability. | Prevents legal friction, maximizes algorithmic trust scores, and secures high Core Web Vitals grading. |
| Review Gamification | Developing interactive, gamified feedback loops and loyalty triggers post-purchase via API integrations. | Generates continuous, user-driven local content, reduces bounce rates, and feeds positive behavioral signals to the algorithm. |
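
The FAQ schema mentioned in the Voice Search Optimization row can be illustrated with a brief sketch. The function below builds a schema.org `FAQPage` object from question and answer pairs and serializes it as JSON-LD. The function name `faq_jsonld` and the sample question are assumptions for illustration only; whether a search engine surfaces the markup depends on its own eligibility rules.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as a schema.org FAQPage document."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-English answers readable in the output.
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical conversational query of the kind a voice assistant receives.
print(
    faq_jsonld(
        [
            (
                "Is there an emergency plumber open now near Commercial Drive?",
                "Yes. Emergency callouts are available around the clock.",
            )
        ]
    )
)
```

The resulting string would typically be placed inside a script element of type application/ld+json in the page, and the visible page content should contain the same questions and answers.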

Strategic Synthesis and Final Conclusions

The discipline of SEO and website development has evolved far beyond the realm of simple marketing tweaks or basic aesthetic web design. As exhaustively demonstrated through the analysis of highly specific coding standards, psychological gamification models, and imposing macroeconomic market regulations, it is a deeply complex, highly technical engineering endeavor fraught with peril.

The extreme difficulty in following the rules stems directly from their multidimensional, intersecting nature. A developer must write code that is incredibly lightweight, minified, and semantically pure to satisfy the mechanical, computational demands of a spider crawling the web. Simultaneously, that same developer must ensure the site’s architecture projects absolute, unassailable commercial trustworthiness, displaying physical addresses, secure payment gateways, and highly transparent legal policies in exact formats to avoid automated algorithmic suspension and misrepresentation penalties.

When visualized as a gamified point system, the fragility of the digital ecosystem becomes painfully apparent. A business can invest thousands of hours and massive capital into generating hyper-local content and gamifying its user experience to accrue maximum engagement points. However, that massive score can be instantly decimated by a single technical failure such as an expired SSL certificate, a poorly executed hreflang tag triggering duplicate content filters, or a heavy, render-blocking JavaScript library that destroys mobile performance. In modern SEO, every single node in the Document Object Model is simultaneously a potential point of catastrophic failure and a vector for competitive optimization.

Ultimately, the overarching strategic reality is dictated by the search engine acting as a dominant market regulator. Google’s Search Essentials are the de facto antitrust and commercial laws of the digital realm. They, and they alone, dictate how lucrative digital “shelf space” is allocated among competing businesses. As the market shifts violently toward AI-driven zero-click searches, where the regulator answers user queries directly on the SERP to the extreme detriment of outbound organic traffic, the rules of the game have become significantly harder and more restrictive.

To survive and thrive in this environment, particularly in hyper-competitive, multicultural, and strictly regulated locales, businesses must completely abandon superficial, antiquated SEO tactics. They must embrace SEO as a fundamental, non-negotiable architectural principle. They must weave complex schema markup, rigorous accessibility compliance, microscopic local targeting, and flawless technical performance into the very bedrock of their digital operations. Only by mastering technical engineering, playing the gamified algorithm flawlessly, and yielding completely to the market’s regulatory demands can a digital entity hope to achieve and maintain enduring visibility.